Introduction

As artificial intelligence (AI) spreads through the digital economy, its interplay with the General Data Protection Regulation (GDPR) is becoming more complex. AI is already embedded in everyday life, from virtual personal assistants to predictive analytics across several industries. Its reliance on gathering, storing, and analysing massive quantities of data has raised privacy concerns.

The GDPR became applicable in May 2018 to harmonise European data privacy rules. Its primary objective is to protect individuals’ privacy and to change how companies handle personal data. GDPR principles, including data minimisation and purpose limitation, affect how AI applications interact with EU users’ data. Individuals may also access, rectify, and erase their data under the legislation.

The abundance of data that AI systems collect and analyse may improve their functions and provide personalised user experiences. At the same time, these systems must comply with the GDPR to protect people’s data rights. Together, AI and the GDPR present a complex matrix of problems and opportunities for enterprises and individuals, forcing us to rethink how we manage personal data.

Understanding AI and its Recent Development in 2023

AI is predicted to grow rapidly in 2023, particularly in natural language processing, generative AI, and automated machine learning. E-commerce, healthcare, transportation, and manufacturing employ AI to improve user experience, efficiency, and productivity. AI adoption is estimated to raise global GDP by 14% by 2030, or $15.7 trillion.

The influence of AI on society and enterprises is considerable, so responsible and ethical AI development and deployment are essential. In 2023, the AI sector is expected to emphasise explainable AI, regulatory compliance, and ethics. It still faces open issues, including ethics, legislation, and AI-human cooperation.

Understanding GDPR

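The GDPR gives people control over their personal data. Since 2018, it has harmonised data protection law across all EU member states and, through the EEA Agreement, also applies in Iceland, Liechtenstein, and Norway. Switzerland is not directly covered by the GDPR and has its own data protection law.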

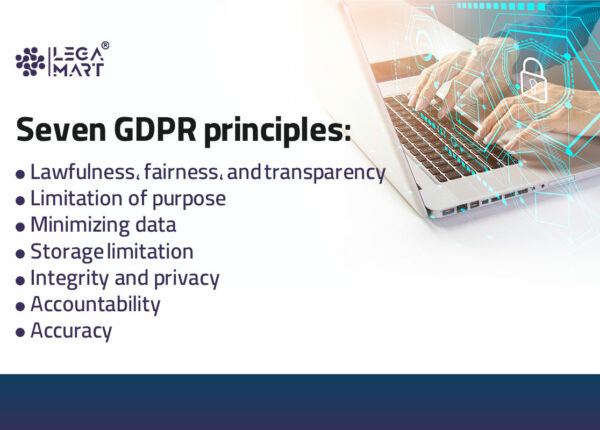

The GDPR is one of the most significant and comprehensive efforts worldwide to regulate how the public and private sectors collect and use personal data. It has prompted corporations to prioritise “privacy by design”. Seven GDPR principles govern data handling. The principles are:

- Lawfulness, fairness, and transparency

- Purpose limitation

- Data minimization

- Accuracy

- Storage limitation

- Accountability

- Integrity and confidentiality

These principles are not a checklist of detailed rules, but they are legally binding: Article 5 sets them out, and the rest of the GDPR builds on them.

The GDPR pushes enterprises to consider carefully what personal data about individuals in the EU they collect, retain, and process. Any organisation that obtains such data must understand how the GDPR applies to its use.

GDPR has transformed how firms manage consumer privacy and given users new rights to access and control their data online. Businesses need a lawful basis, such as the individual’s consent, before collecting and using personal data. A Data Protection Officer (DPO) is required if their core activities involve large-scale processing of sensitive data, like health information, or large-scale regular and systematic monitoring of individuals. Businesses must also notify the supervisory authority of a personal data breach within 72 hours of becoming aware of it, unless the breach is unlikely to put individuals at risk.

What does GDPR say about AI?

The GDPR contains terms that refer to the internet, such as social networks, websites, and links. However, neither the term “artificial intelligence” nor any related concept appears in it. This reflects the GDPR’s main focus on challenges that emerged from the internet and that the 1995 Data Protection Directive had not considered. Even so, several of its provisions are relevant to the use of AI.

Profiling and automated individual decision-making

Several GDPR provisions affect AI-based decisions about individuals. The most important is Article 22, which covers automated individual decision-making, including profiling. It places a general restriction on decisions based solely on automated processing where they produce legal effects for the data subject or similarly significantly affect them. In addition, where Article 22 applies, Article 15 gives the data subject a right to further information, namely:

- The “existence” of automated decision-making, including profiling

- “Meaningful information about the logic involved”

- “The significance and the envisaged consequences of such processing” for the individual

It is important to note that these Article 15 requirements are mandatory only where Article 22 applies. For procedures outside its scope, they are not required.

AI and machine learning

Recently, the European Commission’s Communication on AI acknowledged the impact on AI development of the GDPR and of other EU legislative proposals, such as the Free Flow of Non-Personal Data Regulation, the ePrivacy Regulation, and the Cybersecurity Act. While the GDPR restricts some processing of personal data in the AI context, it can also produce the trust required for AI acceptance through a fully regulated data market.

GDPR Principles Impacting AI Regulations

A few of the GDPR principles are especially relevant to concerns surrounding privacy, ethics, and AI:

- Accountability. Under the GDPR’s risk-based approach, AI-generated decisions that significantly affect individuals are subject to more demanding accountability requirements. Companies therefore need a comprehensive governance structure, including strategies and procedures for evaluating and mitigating risks during design and development.

- Fairness. Companies must not process information in an undisclosed, unexpected, or ill-intentioned manner. The main concern here is biased data: if biases exist in a company’s structure, they are likely to be replicated by the AI systems that company uses. Organisations are therefore responsible for evaluating the data and its impact on individuals.

- Data minimisation and security. Under the GDPR, AI systems must not process personal data beyond what is needed to meet specific goals. Security measures are also required to prevent personal data from being compromised.

- Transparency. Individuals must be aware that AI is being used to process their information. They must also be given meaningful information about the purposes of the processing and the logic the AI uses. AI systems should use clear and consistent logic so that users can understand how the algorithm reaches its decisions.

Issues with AI and GDPR Compliance

AI chatbots like ChatGPT generate text using large language models (LLMs). An LLM is a deep learning model that requires vast amounts of training data, and the chatbot depends on the knowledge acquired during training to summarise, recognise, predict, and generate responses to inputs. The issue is the unquantifiable risk that personal data, which should have been protected under the GDPR, is included in this process.

In recent years, many organisations have relied on AI technologies to increase efficiency, which makes it harder for them to comply with data protection law. To comply with the GDPR, organisations must be able to explain how AI uses data. Specific questions to address include:

- What data is being processed, and where will it be stored?

- Has the AI model been checked for any possible learned or programmed biases?

- Are there any sector-specific requirements?

One possible solution is synthetic data generation: producing data that is not based on real people or events but appears realistic, and using it to train AI models.
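To make the idea of synthetic data concrete, here is a minimal sketch in Python. It assumes a hypothetical customer table; the field names, the word lists, and the `make_synthetic_customers` function are invented for illustration. Every value is drawn at random from fixed lists, so no record is derived from a real person. Real projects usually need synthetic data that also preserves the statistics of the original dataset, which this toy example does not attempt.

```python
import random

# Hypothetical building blocks; none of these values refer to real people.
FIRST_NAMES = ["Alex", "Sam", "Noor", "Kim", "Jo"]
LAST_NAMES = ["Example", "Sample", "Placeholder", "Testcase"]
CITIES = ["Springfield", "Rivertown", "Lakeside"]


def make_synthetic_customers(n, seed=0):
    """Return n made-up customer records built only from the lists above."""
    rng = random.Random(seed)  # seeded so the same call gives the same data
    records = []
    for i in range(n):
        first = rng.choice(FIRST_NAMES)
        last = rng.choice(LAST_NAMES)
        records.append({
            "customer_id": f"SYN-{i:05d}",
            # .invalid is a reserved top-level domain, so no address is real
            "email": f"{first.lower()}.{last.lower()}{i}@example.invalid",
            "name": f"{first} {last}",
            "city": rng.choice(CITIES),
            "age": rng.randint(18, 90),
        })
    return records


if __name__ == "__main__":
    for row in make_synthetic_customers(3):
        print(row)
```

Whether a given synthetic dataset falls outside the GDPR still depends on how it was produced: data generated from real records can sometimes be linked back to individuals, so this should be assessed case by case.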

AI and the Right to Be Forgotten

Although the data boom has led to significant advances in machine learning (ML) and artificial intelligence (AI), the use of these technologies in real-life scenarios can threaten users’ data privacy. To address this concern, certain provisions, such as Article 17(1) of the General Data Protection Regulation (GDPR), grant individuals the right to have their personal data deleted upon request. This is known as the right to be forgotten.

The right to be forgotten allows individuals to ask internet platforms to remove specific personal information about them. This right is considered fundamental for protecting individuals’ privacy, especially when sensitive information is collected, and it calls for monitoring, governance, and audit tools to control such data. AI models are also subject to the right to be forgotten since they learn from data, and individuals should have the option to erase their personal information from AI-based systems.

A significant case that brought attention to the right to be forgotten began in 2010, when Mr. Costeja González filed a complaint against Google and a Spanish newspaper with Spain’s national data protection authority. He had noticed that a search for his name on Google displayed a link to a newspaper announcement about the auction of his property to settle his debts.

The Spanish data protection authority dismissed the complaint against the newspaper, stating that it had a legal obligation to publish the property sale information. However, the complaint against Google was upheld.

Google argued that it was not subject to the EU Data Protection Directive (DPD) since it did not have physical servers in Spain and the data was processed outside the EU. Nevertheless, the European Court of Justice ruled in 2014 that search engine operators like Google are data controllers and are subject to the DPD, even if the data is processed on servers outside the EU, because Google’s Spanish subsidiary sold advertising linked to the search service. Furthermore, individuals can ask search engine operators to remove links referencing their personal information.

The General Data Protection Regulation (GDPR) deals with data protection, privacy, and the transfer of personal data outside the EU, and Article 17 establishes the basis for applying the right to be forgotten.

The GDPR, including Article 17 (Right to Erasure), aims to protect the privacy of data subjects, especially in cases where the data is not essential for legitimate purposes. The objective is to ensure that individuals have the right to erase their personal data when it is no longer necessary or when they withdraw their consent. This helps promote transparency and empowers individuals to have more control over their personal information.

However, implementing this right is not as simple as an organisation deleting an individual’s data from its databases. Because ML models learn from training data, a trained model may have inadvertently memorised the requester’s personal data, and it is difficult to demonstrate, from a legal perspective, that this has not happened.

What Requirements does the GDPR Impose if an Organisation Transmits Personal Information to an AI as part of a ‘Prompt’?

Data fed into an AI system to provide information, instructions, or requests is called “input data.” Some AI providers, particularly those offering language models, use the term “prompts” to refer to the specific subset of input data that gives instructions to the AI model.

Companies that use personal information in prompts or input data may be categorised as either controllers or processors depending on their level of control over the AI’s functioning, the personal information included in prompts, the data accessible to the AI, and the conditions for retaining or sharing that personal information. If a company is considered a controller, it must comply with the following GDPR requirements when processing data using AI:

Art. 6: Lawful basis of processing

Controllers, the entities responsible for processing personal data, must have a valid legal basis for doing so. The GDPR sets out six lawful bases for processing data. There is limited official guidance on which of them can be used when AI processes the data. However, the two most probable lawful bases are:

- Consent: the individuals whose personal information will be processed agree to the use of their information.

- Legitimate interest: the controller has a valid and reasonable interest in processing the data, and that interest is not overridden by the rights and interests of the individuals concerned.

Art. 30: Records of processing activities

Controllers are required to maintain detailed records of their data processing activities. These records should include information such as their contact details, the purposes of the processing, categories of individuals and personal data, recipients of the data (including any international transfers), erasure timelines, and descriptions of security measures. Processors must keep records of their processing activities on behalf of controllers, including the contact details of the parties involved, categories of processing, details of data transfers, and descriptions of security measures. These records must be in writing, and the supervisory authority can request access.

However, smaller organisations with fewer than 250 employees are exempt from these requirements unless their data processing poses risks to data subjects’ rights, involves sensitive data, or is not occasional. In such cases, they must also maintain comprehensive records as mandated by the GDPR.
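The record-keeping duty above maps naturally onto a small data structure. The sketch below is one possible way to hold a controller’s record for an AI use case and to encode the exemption test described in the previous paragraph. The class name, field names, and the `needs_full_records` helper are assumptions made for illustration; they are not terms defined by the GDPR, and the boolean inputs stand in for judgments that a person has to make.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ProcessingRecord:
    """One controller-side entry, using the items listed in the text above."""
    controller_contact: str
    purposes: List[str]
    data_subject_categories: List[str]
    personal_data_categories: List[str]
    recipients: List[str]
    international_transfers: List[str] = field(default_factory=list)
    erasure_timeline: str = ""
    security_measures: str = ""


def needs_full_records(employees, risky, sensitive_data, occasional):
    """Apply the small-organisation exemption as summarised in the text.

    Organisations with 250 or more employees always keep records. Smaller
    ones are exempt unless the processing is risky, involves sensitive
    data, or is not occasional.
    """
    if employees >= 250:
        return True
    return risky or sensitive_data or not occasional


if __name__ == "__main__":
    record = ProcessingRecord(
        controller_contact="dpo@example.invalid",  # placeholder address
        purposes=["customer support chatbot"],
        data_subject_categories=["customers"],
        personal_data_categories=["contact details", "support messages"],
        recipients=["hypothetical AI vendor"],
        erasure_timeline="prompt logs deleted after 30 days",
        security_measures="encryption in transit and at rest",
    )
    # The record must exist in writing; JSON is one simple written form.
    print(json.dumps(asdict(record), indent=2))
    print(needs_full_records(40, risky=False, sensitive_data=False, occasional=False))
```

Note that routine use of an AI system is unlikely to count as occasional processing, so a small company in that position would probably not benefit from the exemption.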

Art. 5(1)(c), (e): Data minimisation and storage limitation

Controllers have an obligation to reduce the amount of personal information they use and the length of time they keep it in identifiable form. When using AI to process personal information, controllers should carefully consider ways to minimise the type and quantity of data provided to the AI. They should also limit the AI’s access to such data after its processing is finished, ensuring that data is not retained longer than necessary.
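One practical way to minimise the data given to an AI is to strip obvious identifiers from a prompt before it leaves the organisation. The following sketch replaces email addresses and phone-like numbers with placeholders. The `minimise_prompt` function and both regular expressions are illustrative assumptions: they are deliberately simple, will miss many formats, and do not catch names, addresses, or other identifying details.

```python
import re

# Deliberately simple patterns; real-world detection needs far more care.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def minimise_prompt(prompt):
    """Replace email addresses and phone-like numbers with placeholders."""
    redacted = EMAIL_RE.sub("[EMAIL]", prompt)
    redacted = PHONE_RE.sub("[PHONE]", redacted)
    return redacted


if __name__ == "__main__":
    raw = "Contact jane.doe@example.com or +44 20 7946 0958 about the order."
    print(minimise_prompt(raw))
    # prints: Contact [EMAIL] or [PHONE] about the order.
```

Redaction of this kind reduces what the AI receives but does not make the prompt anonymous, so the other obligations in this section still apply to whatever personal information remains.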

Art. 12–14: Privacy notice

Controllers have an obligation to inform individuals about how their personal information is being processed. If a controller is processing data obtained from public sources, like scraping data from the internet, some supervisory authorities have recommended that the controller inform the public through mass media channels, such as radio, television, or newspapers. This communication should inform the public about the data scraping process and guide them on accessing the company’s privacy notice for more information.

Art. 15: Access rights

Controllers must allow individuals to access any personal information that is held about them. In AI processing, a controller should be ready to respond to an individual’s request for access to their personal information. This includes information that might have been processed by an AI, such as being used as input data or included in a prompt. It also includes data that might be retained within a dataset accessible to an AI, which may be used for further training and refinement.

Art. 16: Correction rights

Controllers must allow individuals to request the correction of any inaccurate personal information. When using AI to process personal data, some supervisory authorities suggest that companies using publicly sourced data (like internet scraping) should provide an online tool for individuals to request and obtain the rectification of their personal data used to train the AI and any data generated by the AI. This requirement may also involve addressing records that include potentially inaccurate personal information.

Art. 17: Erasure rights

Controllers must allow individuals to request the deletion of their personal information, for example where it is no longer needed for the original purposes for which it was collected. When using AI to process personal data, a controller that receives a deletion request should check whether the requester’s personal information can be removed from any data that the AI is still retaining. This requirement to erase data may also apply to any logs or records of interactions with the AI system.
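For the logs mentioned above, an erasure request can be handled as a filter over stored interactions. This minimal sketch assumes each log entry carries a `subject_id` that links it to one person; that identifier, the `PromptLogEntry` class, and the `erase_subject` function are all hypothetical. It covers only stored logs and does nothing about information a model may have learned during training.

```python
from dataclasses import dataclass


@dataclass
class PromptLogEntry:
    subject_id: str  # hypothetical key linking the entry to one individual
    prompt: str
    response: str


def erase_subject(log, subject_id):
    """Return (remaining_entries, erased_count) for one erasure request."""
    remaining = [entry for entry in log if entry.subject_id != subject_id]
    return remaining, len(log) - len(remaining)


if __name__ == "__main__":
    log = [
        PromptLogEntry("user-1", "Where is my parcel?", "It ships tomorrow."),
        PromptLogEntry("user-2", "Change my address.", "Done."),
        PromptLogEntry("user-1", "Cancel my order.", "Cancelled."),
    ]
    log, erased = erase_subject(log, "user-1")
    print(erased, [entry.subject_id for entry in log])
```

Returning the number of erased entries gives the controller something to record when it confirms to the requester that the deletion was carried out.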

Art. 7(3), 21: Right to withdraw consent or object

If a controller relies on individuals’ consent for using AI, the GDPR mandates that it must offer a way for individuals to withdraw their consent. Likewise, if a controller uses AI based on its legitimate interests, it must give individuals the option to object to the ongoing use of their data. However, withdrawing consent does not affect the lawfulness of processing that took place before the withdrawal.

Art. 35: Data protection impact assessments

The GDPR requires controllers to perform data protection impact assessments (DPIAs) when processing, particularly processing that uses new technologies, is likely to result in a high risk to individuals’ rights and freedoms. Hence, controllers should carefully assess whether a DPIA is necessary when incorporating personal information in prompts or providing it as input data to an AI system.

Art. 44-50: Cross-border data transfers

If personal information in the form of a prompt or input data is going to be sent to an AI hosted outside of the European Economic Area, the controller may have to implement measures to ensure that the data is adequately protected in the destination jurisdiction.

Art. 28: Vendor management

If a controller plans to use a third-party service to host personal information transmitted to an AI system (like a third-party-hosted AI product), the GDPR may necessitate that the third party agree to specific contract provisions mandated for processors.

Conclusion

AI and the GDPR are not opposing forces. The GDPR may limit or complicate some personal data processing in an AI setting. But as we progress towards a fully regulated data market, it may eventually help generate the confidence necessary for AI acceptance by consumers and governments. Either way, GDPR and AI will remain inseparable. As more AI- and data-specific legislation appears worldwide, their relationship will strengthen and deepen.

AI used in line with the GDPR can yield huge gains. Used carelessly, however, it could negatively affect people’s lives and violate their fundamental rights. Therefore, it is crucial for data protection and information governance officials to grasp the consequences of AI technology and how to use it fairly and lawfully.